What is chat context size?

Chat context size determines how much information is sent to the AI every time a user asks a question. It includes the chatbot's role instructions (prompt), the current conversation history, and relevant training data retrieved from your knowledge base. More context typically leads to more accurate and detailed answers.

Benefits of larger context:

- Improved accuracy and relevance of responses

- Better understanding of longer conversations

- Enhanced ability to handle complex questions by referencing more training data

- More personalized interactions when chat memory is enabled, since information from previous conversations can be included

How to change the context size

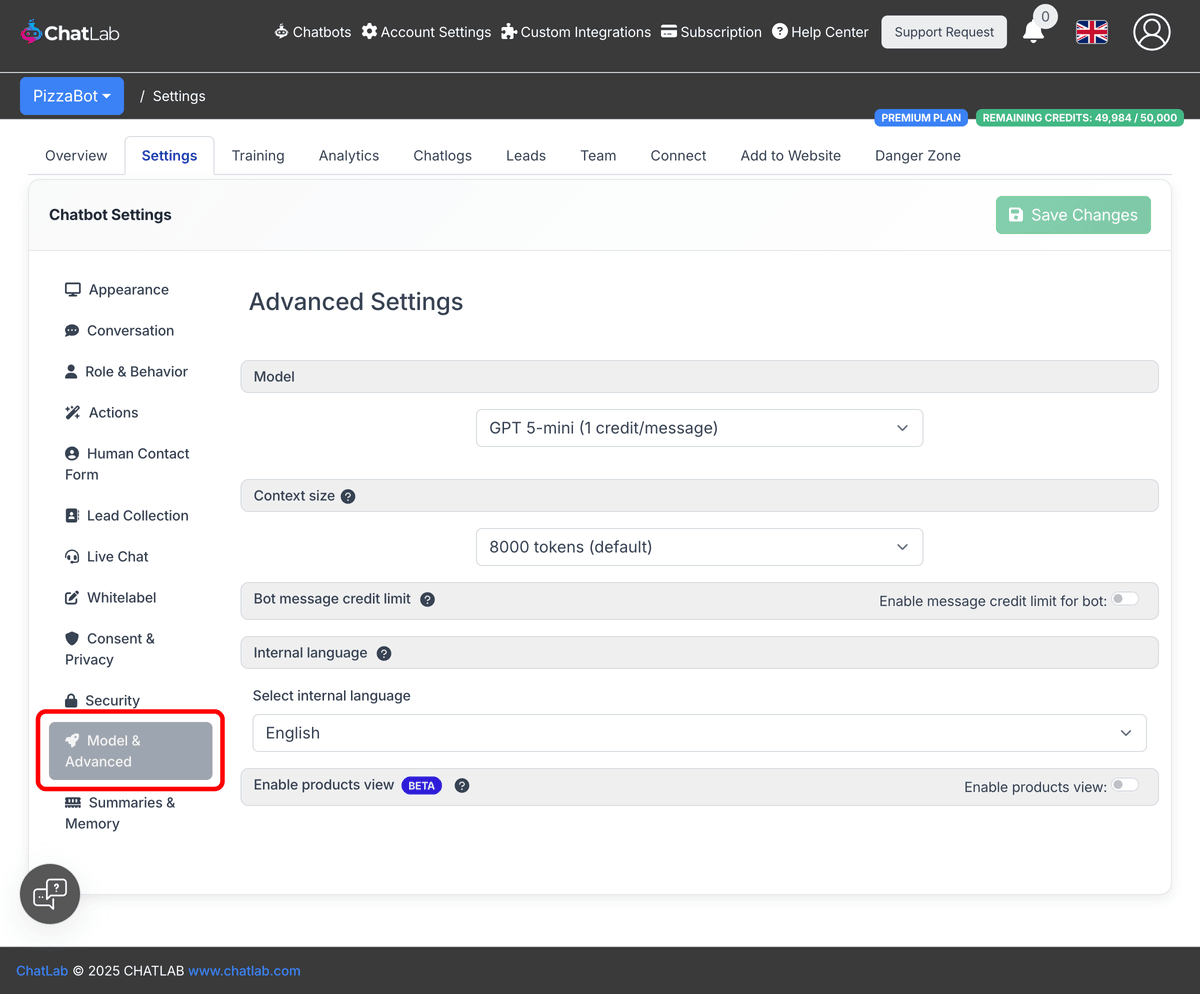

Select your chatbot, click the Settings tab, then select Model & Advanced in the left sidebar.

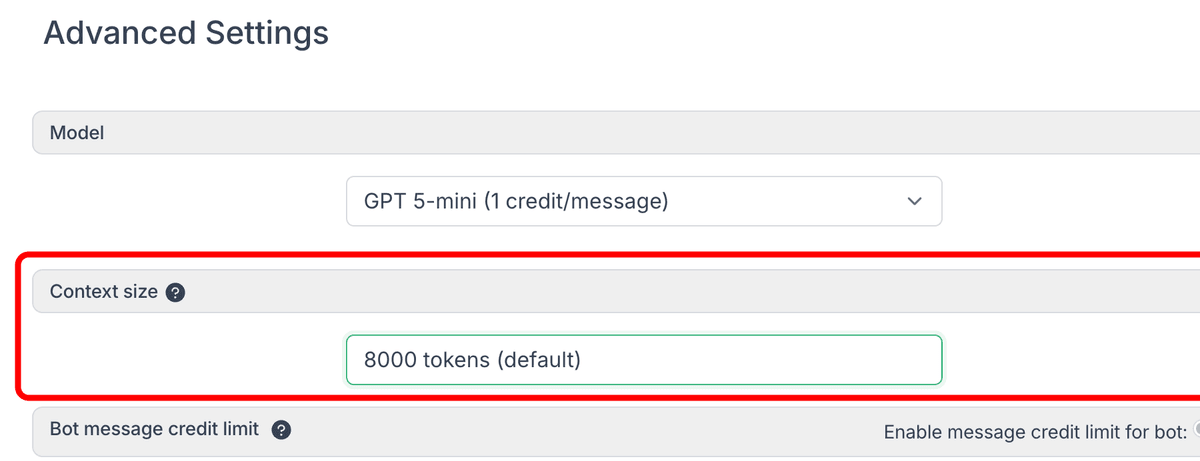

Find the Context size dropdown and choose your preferred option:

- 8,000 tokens -- 1x message credits per message (default)

- 16,000 tokens -- 2x message credits per message

- 32,000 tokens -- 4x message credits per message

How the context window is divided

The system reserves approximately 3,000 tokens for the chatbot's role instructions (prompt) and the current conversation. The remaining tokens are used for training data, which is dynamically selected based on the user's question.

- 8,000 tokens -- approximately 5,000 tokens available for training data

- 16,000 tokens -- approximately 13,000 tokens available for training data

- 32,000 tokens -- approximately 29,000 tokens available for training data

On average, one word equals approximately 1.3 tokens, so 5,000 tokens correspond to roughly 3,800 words.
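The token budgets and word estimate above can be checked with a short Python sketch. It is an illustration only, not part of the product: the function names and constants are hypothetical and simply restate the approximate figures from this article (about 3,000 reserved tokens and about 1.3 tokens per word).

```python
# Approximate figures from this article; real usage varies per request.
RESERVED_TOKENS = 3_000   # role instructions (prompt) + current conversation
TOKENS_PER_WORD = 1.3     # average ratio; varies by language and content


def training_data_budget(context_size: int) -> int:
    """Return the approximate number of tokens left for training data."""
    return max(context_size - RESERVED_TOKENS, 0)


def approx_words(tokens: int) -> int:
    """Convert a token count into a rough word count."""
    return round(tokens / TOKENS_PER_WORD)


if __name__ == "__main__":
    for size in (8_000, 16_000, 32_000):
        budget = training_data_budget(size)
        print(f"{size:,} tokens -> ~{budget:,} tokens "
              f"(~{approx_words(budget):,} words) of training data")
```

Under these assumptions, the three options leave room for roughly 3,800, 10,000, and 22,300 words of training data. Enabling chat memory reduces these figures, because part of the context is then allocated to memory.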

If you use chat memory, part of the context is also allocated to summaries and client profiles from previous conversations. The memory size slider in Settings > Summaries & Memory controls the split between memory and knowledge base data.

Credit cost per context size

The default context size is 8,000 tokens. Increasing it multiplies the number of message credits each message consumes:

- 8,000 tokens -- 1x message credits (default)

- 16,000 tokens -- 2x message credits per message

- 32,000 tokens -- 4x message credits per message

A larger context typically improves answer accuracy and detail, but each message consumes more message credits.
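To estimate how a context size change affects credit usage, the multipliers above can be applied to an expected message volume. The sketch below is a rough illustration: `estimate_credits` and its `base_credits_per_message` parameter are hypothetical names, and the base cost of 1 credit per message is an assumption, not a figure from this article.

```python
# Multipliers listed in this article, keyed by context size in tokens.
CREDIT_MULTIPLIER = {8_000: 1, 16_000: 2, 32_000: 4}


def estimate_credits(messages: int, context_size: int,
                     base_credits_per_message: int = 1) -> int:
    """Estimate total message credits for a number of messages.

    base_credits_per_message is an assumed base cost; substitute the
    cost that applies to your plan and model.
    """
    if context_size not in CREDIT_MULTIPLIER:
        raise ValueError(f"Unsupported context size: {context_size}")
    return messages * base_credits_per_message * CREDIT_MULTIPLIER[context_size]


if __name__ == "__main__":
    for size in CREDIT_MULTIPLIER:
        print(f"{size:,} tokens: {estimate_credits(1_000, size):,} credits "
              f"for 1,000 messages")
```

With the assumed base cost, 1,000 messages consume 1,000 credits at 8,000 tokens, 2,000 credits at 16,000 tokens, and 4,000 credits at 32,000 tokens.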